What’s in this article

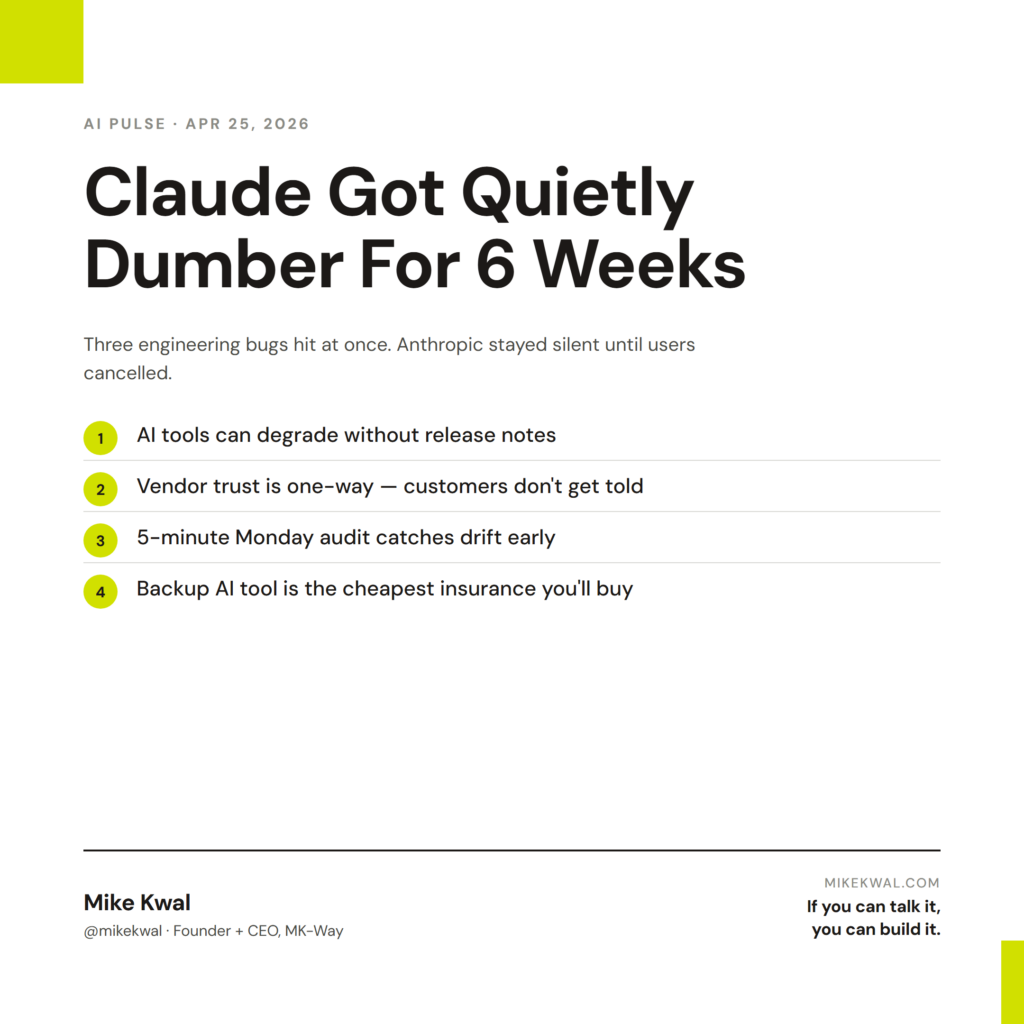

- What broke — three engineering bugs hit Claude Code at once.

- Why Anthropic stayed quiet for six weeks.

- The lesson for designers betting on a single AI tool.

- The 5-step audit checklist I now run on my AI stack.

- How I’d actually use this to protect my client work.

I’m Mike Kwal. I run my whole studio on Claude. So six weeks of silently degraded performance is the kind of news I take seriously. Here’s what happened and what I changed.

What just happened

For roughly six weeks in March and April 2026, Claude Code got quietly dumber. Anthropic confirmed three engineering bugs hit simultaneously:

- Reasoning dropped. A model-tuning change reduced default thinking depth.

- Memory wiped itself each session. Context wasn’t persisting the way it was supposed to.

- A system prompt tweak cost 3% quality. Small in isolation, but it compounded with the other two issues.

Anthropic didn’t announce it. They didn’t email customers. The behavioral shift only surfaced when heavy users started cancelling subscriptions and posting comparisons publicly. The story broke when Anthropic finally published the post-mortem.

This is a “trust but verify” moment for any designer running a studio on AI tools.

Why this matters for designers

Three things this exposes about working with AI vendors.

AI tools degrade silently. Unlike a software update with release notes, model behavior changes can ship invisibly. The Claude you used last week might not be the Claude you’re using today.

Vendor trust is one-way. Anthropic’s internal communications didn’t reach customers for over a month. That’s not malicious — it’s how big software companies handle internal issues. But the customer experience is “you paid for X and got 0.8X for six weeks.”

Self-auditing is now a designer skill. When the tools you depend on can quietly change underneath you, you need a way to detect it. Not from the vendor. From your own testing.

For designers running client work on AI, this means building a small habit of weekly “is the tool still working as expected” testing. Five minutes a week. Catches degradations early.

My $0.02 — How I’d actually use this

The 5-step audit checklist I now run on my AI stack every Monday morning.

Step 1: Run the same 3-prompt baseline test. I have a saved doc with three prompts I’ve been running on Claude for months. Same prompts, same expected outputs. Every Monday, I run them, eyeball the answers, and rate the output 1-5. Two consecutive low weeks = something changed. Investigate or switch.
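If you’d rather log the scores than keep them in your head, the "two consecutive low weeks" rule from Step 1 can be sketched in a few lines of Python. This is a minimal illustration, not the exact doc described above: the 2.5 cutoff for a "low" week and the names `weekly_average` and `drift_suspected` are assumptions made for the example.

```python
# Assumption: a week counts as "low" when the average of the three
# 1-5 ratings is 2.5 or below. Pick whatever cutoff fits your prompts.
LOW_WEEK_CUTOFF = 2.5


def weekly_average(ratings):
    """Average the 1-5 ratings given to one week's baseline prompts."""
    return sum(ratings) / len(ratings)


def drift_suspected(weekly_averages, cutoff=LOW_WEEK_CUTOFF):
    """Return True when the two most recent weeks are both low."""
    if len(weekly_averages) < 2:
        return False  # not enough history to judge yet
    return all(avg <= cutoff for avg in weekly_averages[-2:])


# Hypothetical history: two good weeks followed by two weak ones.
history = [
    weekly_average([5, 4, 5]),
    weekly_average([4, 4, 5]),
    weekly_average([2, 2, 1]),
    weekly_average([2, 1, 2]),
]

if drift_suspected(history):
    print("Two low weeks in a row: investigate or switch.")
```

One bad week does not trip the check on its own, which matches the rule: a single off day is noise, two in a row is a pattern.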

Step 2: Check Claude Code on a known project. I have an old client project I’ve shipped. Every Monday I prompt: “Read this codebase. Identify the three most likely places to add a security bug.” I know what the right answer is. If Claude misses it, that’s a strong signal the model has drifted.
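Because the right answer is already known, the Step 2 check can be reduced to "which expected findings did the response fail to mention?" The sketch below assumes you paste the response in as plain text. The file names and phrases in `expected` are hypothetical placeholders, and simple substring matching is a crude stand-in for actually reading the answer.

```python
def missed_findings(response_text, expected_findings):
    """Return the expected findings that the response never mentions."""
    text = response_text.lower()
    return [f for f in expected_findings if f.lower() not in text]


# Hypothetical known-answer list for an old, already-shipped project.
expected = ["auth/session.js", "upload handler", "checkout webhook"]

# Hypothetical response pasted in from this week's run.
response = "The riskiest areas are auth/session.js and the upload handler."

missing = missed_findings(response, expected)
if missing:
    print("Missed this week:", missing)
```

An empty list means the response named every known weak spot. Anything left in the list is worth a closer manual look before concluding that the model changed.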

Step 3: Cross-check against ChatGPT. I run the same prompt through GPT-5.5 and compare. If they agree, I trust both. If they diverge sharply, I dig in. Two senior reviewers catching different issues is a sign of strength, not a problem.
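For a rough first pass at "do they diverge sharply," word overlap between the two answers can be scored with Python’s standard `difflib` module. This is only a sketch: the 0.4 threshold is an assumption, and word overlap says nothing about whether two differently worded answers actually agree, so a low score is a prompt to read both, not a verdict.

```python
from difflib import SequenceMatcher


def similarity(answer_a, answer_b):
    """Score word-level overlap between two answers, from 0.0 to 1.0."""
    words_a = answer_a.lower().split()
    words_b = answer_b.lower().split()
    return SequenceMatcher(None, words_a, words_b, autojunk=False).ratio()


def diverges_sharply(answer_a, answer_b, threshold=0.4):
    """Assumption: overlap below the threshold counts as a sharp divergence."""
    return similarity(answer_a, answer_b) < threshold


# Hypothetical answers from the two tools to the same prompt.
claude_answer = "Validate the upload handler and rotate the session tokens."
chatgpt_answer = "Rewrite the landing page copy for better conversion."

if diverges_sharply(claude_answer, chatgpt_answer):
    print("Answers diverge sharply: dig in.")
```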

Step 4: Check the Anthropic status page. Once a week. Five seconds. Catches platform-level issues that would otherwise look like model issues.

Step 5: Keep a backup tool ready. I have ChatGPT Plus active even when Claude is my primary. If Claude’s quality drops for two weeks, I have a working alternative on day one. The $20/month is the cheapest insurance you’ll ever buy.

That’s a five-minute Monday habit. Catches the next “Anthropic quietly broke Claude” event before it costs me a client deliverable.

The other behavior change: I tell clients what AI tools their build is running on. Not the prompt details — they don’t care. But the high-level stack: “this site is built with Claude Code on Opus 4.7, deployed via Netlify, monitored with Claude Security.” When something breaks, I can explain it. When something improves, I can sell the upgrade.

The bigger lesson: AI tools are vendors, not magic. Treat them like vendors. Run vendor due diligence weekly. Have a backup. Tell your clients which vendors you depend on.

Want the full playbook?

For the full AI tool stack I run my studio on, plus the audit pattern that’s saved me from drift, see my Talk-to-Build Stack.

FAQ

Is Claude back to full quality now?

Anthropic announced the fixes mid-April. Most users report performance is back to baseline. The trust gap takes longer to close.

Should I switch off Claude?

Not over one event. But run the 5-step audit. If you see drift again, you’ll catch it early.

Does this mean ChatGPT is more reliable?

Different vendor, same risk shape. OpenAI has had its own quiet model changes. Run audits on whatever AI you use.

What if I can’t tell the difference between AI outputs?

Build a baseline test now. Pick three prompts whose right answers you already know. Run them weekly. Trust the data, not the vibe.

Can I get a refund for degraded performance?

Anthropic offered some pro-rated credits. Worth asking customer support if you can document the impact on your work.

Want help applying this?

Four ways to go deeper:

- Build with Builders. Join the Talk-to-Build community to learn how to earn money with AI, download our AI Skills, and advance your business. Learn to build real assets for website design and Shopify stores (Gen-AI images, cinematic AI videos, conversational AI office secretaries) that you can sell to SMBs that want the outcomes but don’t have time to learn the skills.

- Done-for-you. MK-Way builds AEO-ready websites and apps for design agencies and founders who want it shipped fast.

- Quick question. DM me on Instagram. I read every message.

- B2B / strategy. Connect on LinkedIn for deeper conversations about AI in design and agency work.

Last updated: May 7, 2026.