What’s in this article

- What DeepSeek V4 ships — frontier-level performance at fraction-of-a-cent pricing.

- Why a 1M token context window matters for designers.

- Where DeepSeek wins over Claude and GPT — and where it doesn’t.

- The bulk-task economics that changed for studios.

- How I’d actually use this on real client volume work.

I’m Mike Kwal. DeepSeek isn’t a tool most designers know about, but it’s quietly the cheapest way to run heavy AI workloads in your studio. V4 makes that math even better.

What just happened

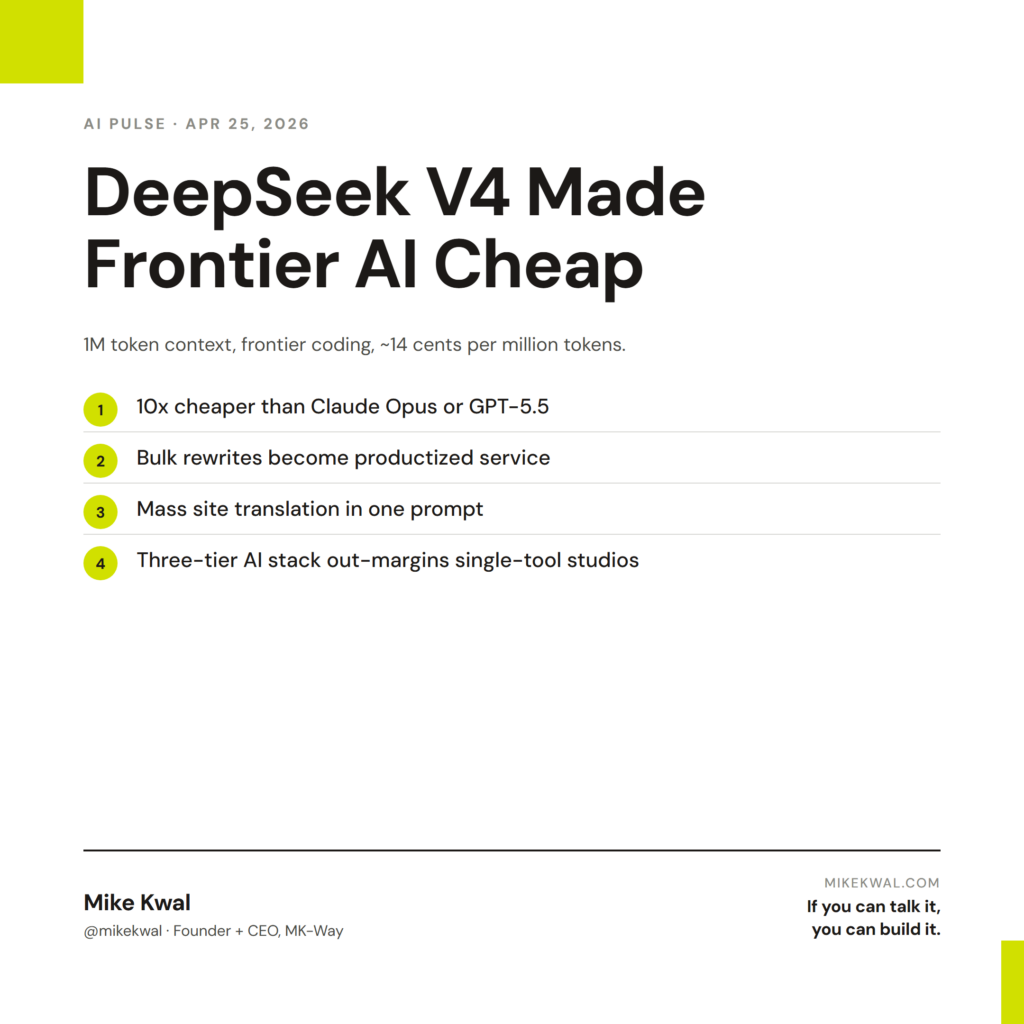

DeepSeek V4 dropped — open-source, available via API. The headline specs:

- Frontier-level coding performance. Close to Claude Opus and GPT-5.5 on most tasks.

- 1 million token context window by default. Feed it your entire codebase or 50 client docs in one prompt.

- ~14 cents per million tokens. Roughly 10x cheaper than GPT-5.5 or Claude Opus.

For builders, this changes the cost of bulk AI work — translation, summarization, content rewrites — from “noticeable line item” to “rounding error.”

Why this matters for designers

Three workflows just got radically cheaper.

Bulk content rewrites. A client has 200 badly written product descriptions that need to be rewritten for AEO (answer engine optimization). On Claude Opus, that’s $30–50 in API costs. On DeepSeek V4, it’s about $3. For the first time, you can offer this as a productized service and still make a margin.

Mass site translation. A client expanding into Spanish or French markets needs every page translated. DeepSeek V4, with its 1M context window, can take the entire site at once and translate it consistently. The same job used to require either a translation agency or a freelance translator at around $20 per page.

Big-batch competitive research. Need to read 100 competitor product pages and extract value props? DeepSeek V4 in one prompt. Pennies.

For designers carrying their own studio overhead, this matters too: any AI workflow that runs on volume is now affordable. Daily AI Pulse summaries, weekly client report drafts, and monthly content batches are all cheap enough to automate.
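One way to run bulk jobs like these is to group items into batches that stay under a token budget, so a failed request only costs you one batch instead of the whole job. The sketch below is an illustration under stated assumptions: `estimate_tokens` uses a rough four-characters-per-token rule of thumb rather than a real tokenizer, and `make_batches` and its budget parameter are hypothetical names, not part of any DeepSeek tooling.

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about 4 characters per token for English text."""
    return max(1, len(text) // 4)


def make_batches(items: list[str], max_tokens_per_batch: int) -> list[list[str]]:
    """Group items in order so each batch stays under the token budget.

    An item that is larger than the budget on its own still gets a batch
    to itself rather than being dropped.
    """
    batches: list[list[str]] = []
    current: list[str] = []
    used = 0
    for item in items:
        cost = estimate_tokens(item)
        # Start a new batch when adding this item would exceed the budget.
        if current and used + cost > max_tokens_per_batch:
            batches.append(current)
            current, used = [], 0
        current.append(item)
        used += cost
    if current:
        batches.append(current)
    return batches
```

With a 1M-token window the budget can be generous, but smaller batches are easier to spot-check and retry.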

My $0.02 — How I’d actually use this

DeepSeek isn’t my primary tool — Claude is. But it’s now my primary tool for volume work, and that distinction matters.

Here’s the split I run.

Claude Code: hard, project-aware work. Custom client builds, security review, architecture decisions, anything that needs careful reasoning across a single project. Worth the higher per-token cost.

ChatGPT (GPT-5.5): copy and creative work. Hero headlines, blog drafts, ad creative, anything where writing voice matters. Worth the $20/month.

DeepSeek V4: bulk and simple work. Translating content, rewriting product descriptions in batch, summarizing 50 transcripts at once, basic competitive research. Cents per task.

The math:

- Old workflow: rewrite 200 product descriptions on Claude → up to $50, 30 minutes

- New workflow: rewrite 200 product descriptions on DeepSeek → about $3, 8 minutes
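The arithmetic behind numbers like these is just tokens times price. Here is a minimal sketch, assuming a single blended rate of $0.14 per million tokens (the headline figure quoted earlier). Real bills usually price input and output tokens separately, and both function names are made up for illustration.

```python
# Assumption: one blended rate, taken from the headline price in this article.
DEEPSEEK_USD_PER_MILLION = 0.14


def estimate_cost(total_tokens: int, usd_per_million: float = DEEPSEEK_USD_PER_MILLION) -> float:
    """Return the approximate cost in USD for a given number of tokens."""
    return total_tokens / 1_000_000 * usd_per_million


def tokens_for_budget(budget_usd: float, usd_per_million: float = DEEPSEEK_USD_PER_MILLION) -> int:
    """Return roughly how many tokens a budget buys at the given rate."""
    return int(budget_usd / usd_per_million * 1_000_000)
```

Plug in token counts from your own usage dashboard rather than guessing. The rate constant is the only number taken from this article.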

Across a year, this saves real money on volume tasks I’d otherwise either skip or eat the margin on.

What I wouldn’t use DeepSeek for: client-facing creative writing, strategic decisions, or anything that touches sensitive client data (DeepSeek’s data residency story is less mature than Claude’s or OpenAI’s). Use Claude or GPT for those.

The bigger lesson for designers: AI is becoming a tiered capability, not a single tool. Premium models for high-stakes work. Mid-tier models for daily creative. Cheap models for volume. Studios that run all three tiers will out-margin studios that pay premium prices for everything.
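The three-tier split can be written down as a small routing table, which is handy if you script your workflows. This is only a sketch of the idea: the task labels, the `Tier` names, and the `pick_tier` function are hypothetical, and the rule that sensitive work never goes to the volume tier mirrors the caveat about client data above.

```python
from enum import Enum


class Tier(Enum):
    PREMIUM = "claude"     # hard, project-aware work
    CREATIVE = "chatgpt"   # copy and creative work
    VOLUME = "deepseek"    # bulk and simple work


# Hypothetical task labels mapped to the split described in this article.
ROUTES = {
    "client_build": Tier.PREMIUM,
    "security_review": Tier.PREMIUM,
    "headline": Tier.CREATIVE,
    "blog_draft": Tier.CREATIVE,
    "translation": Tier.VOLUME,
    "batch_rewrite": Tier.VOLUME,
    "transcript_summary": Tier.VOLUME,
}


def pick_tier(task: str, sensitive: bool = False) -> Tier:
    """Pick a model tier for a task; unknown tasks default to the premium tier."""
    tier = ROUTES.get(task, Tier.PREMIUM)
    # Sensitive client data is never routed to the volume tier.
    if sensitive and tier is Tier.VOLUME:
        return Tier.PREMIUM
    return tier
```

Defaulting unknown tasks to the premium tier is a cautious design choice: a misrouted task costs a little more instead of producing weaker output.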

Want the full playbook?

For the full AI tool stack I run my studio on — Claude, ChatGPT, DeepSeek, when to use each — see my Talk-to-Build Stack.

FAQ

How do I access DeepSeek V4?

Through their API at deepseek.com. Setup is similar to OpenAI’s API: get a key, then plug it into any tool that lets you set a custom API key and endpoint.
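If you would rather call the API from a script, the request looks much like an OpenAI chat request. The sketch below uses only the Python standard library and rests on a few assumptions you should check against DeepSeek’s documentation: the endpoint URL, the OpenAI-style payload shape, and especially the model name, which is a placeholder and may not be the identifier used for V4.

```python
import json
import urllib.request

# Assumption: DeepSeek's OpenAI-compatible chat endpoint.
API_URL = "https://api.deepseek.com/chat/completions"
# Placeholder: look up the actual V4 model identifier in DeepSeek's docs.
MODEL = "deepseek-chat"


def build_request(prompt: str, api_key: str, model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the text of the first reply."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

To try it, call `send(build_request("Rewrite this product description: ...", your_key))` with your own key. Keep the key in an environment variable rather than in the script.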

Is it safe to use for client work?

For non-sensitive bulk tasks (rewrites, translation, summaries), yes. For anything where the data must stay private, check their data policy carefully or use Claude/GPT instead.

Can I use it inside Claude Code or Cursor?

Yes — both support custom model endpoints. Configure DeepSeek as an alternate model and switch when appropriate.

Does it work on long documents?

Yes — 1M token context handles enterprise-size inputs. This is its biggest practical advantage for bulk work.

Is it really frontier-level?

On benchmarks, close. On hard reasoning, slightly behind Claude Opus and GPT-5.5. On 80% of practical tasks, indistinguishable.

Want help applying this?

Four ways to go deeper:

- Build with Builders. Join the Talk-to-Build community to learn how to earn money with AI, download our AI Skills, and advance your business. Learn to build real assets for website design and Shopify stores (Gen-AI images, cinematic AI videos, conversational AI office secretaries) that you can sell to SMBs that want the outcomes but don’t have time to learn the skills.

- Done-for-you. MK-Way builds AEO-ready websites and apps for design agencies and founders who want it shipped fast.

- Quick question. DM me on Instagram. I read every message.

- B2B / strategy. Connect on LinkedIn for deeper conversations about AI in design and agency work.

Last updated: May 7, 2026.